If you’re already using AI agents for development, you’ve probably felt this: the code works, but the codebase slowly gets harder to follow. Patterns drift. Something looks fine in review and turns out to have a bug two weeks later. The agent did what you asked – mostly – but not exactly how you would have done it.

This isn’t an AI problem. It’s a process problem. Most of the advice out there covers the basics – write a good CLAUDE.md, keep it short, plan before you code. This is about what we learned after the basics stopped being enough.

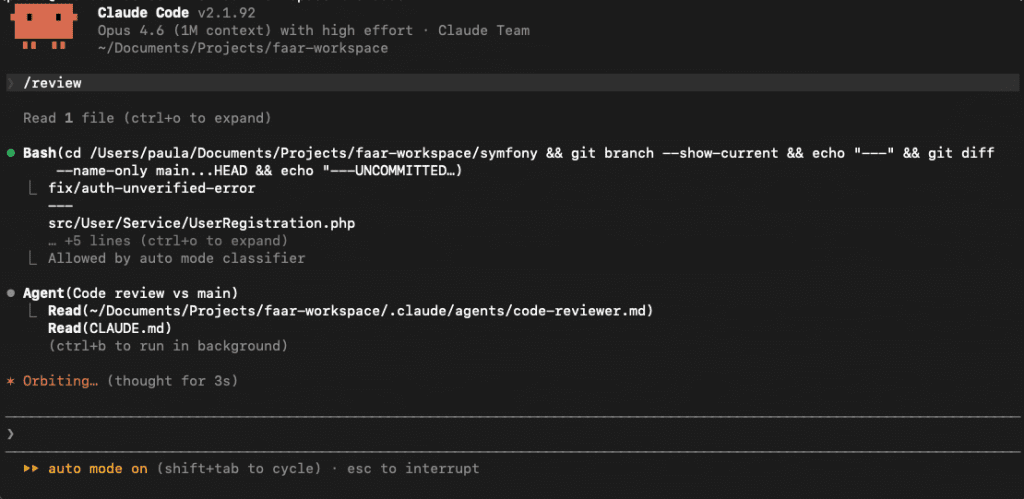

We use Claude Code, so the specifics below are about CLAUDE.md and review sub-agents. If you’re on Cursor or Copilot, the mechanisms differ but the process maps over – .cursorrules and copilot-instructions.md play the same role.

Why AI code loses quality

Most people describe what they want and let the AI figure out the rest. Describe the feature, get the code, review it, ship it.

The problem is that “review it” doesn’t work when you never defined what correct looks like.

Your review is only as good as your mental model of what should have been built. If you asked for “a check-in flow” and the agent built something that looks like a check-in flow, it passes. You didn’t specify the exact authorization rules or the error cases, so you won’t notice when the agent guessed wrong about them. Bugs accumulate quietly.

The agent isn’t being careless. It’s filling gaps with its best guess – patterns from general training, not your codebase. Wherever you leave something undefined, it makes a decision. And that decision might not be yours.

Start with a well-defined architecture

This isn’t a CLAUDE.md problem. It’s a team problem. Before you can write a single useful rule for the agent, the team needs to have made the decisions. How do modules communicate? How do you handle filtering? What goes in a repository and what doesn’t?

If the team works from vague principles – “keep things clean”, “use SOLID” – there’s nothing concrete to encode. The agent fills those gaps the same way a new developer would: by guessing. Except it guesses faster and across more files.

The level of specificity that works: “Modules communicate via Events only – never call another module’s services directly.” Or: “All filtering is declarative – use Parameter wrappers on operations. Never write custom providers for filtering.” These aren’t suggestions. They’re decisions. Every developer on the team follows them. The agent follows them too.
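To make the first rule concrete, here is a minimal sketch of what "Events only" can look like in code. It is written in Python purely for illustration, and every name in it (`EventBus`, `BookingCheckedIn`, `BookingModule`, `NotificationModule`) is hypothetical rather than taken from our codebase. A real project would normally use its framework's event dispatcher instead of a hand-rolled bus.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class BookingCheckedIn:
    """Event published by the (hypothetical) booking module."""
    booking_id: int


class EventBus:
    """Minimal in-process event bus, standing in for a framework dispatcher."""

    def __init__(self) -> None:
        self._handlers: dict[type, list[Callable]] = defaultdict(list)

    def subscribe(self, event_type: type, handler: Callable) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event: object) -> None:
        # Call every handler registered for this exact event type.
        for handler in self._handlers[type(event)]:
            handler(event)


class NotificationModule:
    """Reacts to booking events; the booking module never references it."""

    def __init__(self, bus: EventBus) -> None:
        self.sent: list[str] = []
        bus.subscribe(BookingCheckedIn, self.on_checked_in)

    def on_checked_in(self, event: BookingCheckedIn) -> None:
        self.sent.append(f"Booking {event.booking_id} checked in")


class BookingModule:
    """Publishes an event instead of calling another module's services."""

    def __init__(self, bus: EventBus) -> None:
        self._bus = bus

    def check_in(self, booking_id: int) -> None:
        # Booking-specific work would happen here, then the event goes out.
        self._bus.publish(BookingCheckedIn(booking_id))


bus = EventBus()
notifications = NotificationModule(bus)
BookingModule(bus).check_in(42)
print(notifications.sent)
```

The point of a rule this specific is that a violation is easy to see: any import of another module's service inside `BookingModule` would be wrong by definition, with no room for interpretation.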

When you’ve defined your architecture at that level – folder structure, patterns per layer, which tool handles which job, what tests are required – encoding it for the agent is the easy part. CLAUDE.md becomes a transcript of decisions already made, not a place where you try to teach the agent how to code.

That covers what stays constant across features. But every feature still needs its own approach decided before anyone writes a line of code.

Brainstorm the approach before you plan anything

The first step in our workflow isn’t writing a plan. It’s figuring out what to build.

You describe the problem. The agent researches your codebase, reads existing patterns, and comes back with options – each with honest tradeoffs. Not a recommendation. Options.

For example: we needed Google OAuth. The options were frontend-provided token validation or server-side redirects. The agent laid out both – what each required, where each could break, how each fit our existing auth flow. We discussed them and picked server-side redirects. That decision became one line of context in the feature plan. The plan itself took minutes to write because the thinking was already done.

Without the brainstorm, the agent would have picked one. Maybe the right one, maybe not. And when you reviewed the implementation, you’d be evaluating a decision you never made.

That’s the second reason this step matters: when you review the code, you’re reviewing your decision, not the agent’s. You know what was considered, what was rejected, what the edge cases are. Review becomes real instead of a gut check.

Write a plan before the agent writes code

Once the approach is decided, it gets written into a feature plan before a single line of implementation code is written.

A feature plan defines every file to create, every field, the implementation order, and numbered acceptance criteria. The agent works from this. It has no gaps to fill with guesses – it has a specific checklist to execute.
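As a rough illustration of that shape, a feature plan can be thought of as a small data structure. The sketch below is an assumption about layout, not our actual plan format: the class names, file paths, field names, and criteria are all made up for the example, and in practice the plan can just as well live in a plain text file.

```python
from dataclasses import dataclass, field


@dataclass
class PlannedFile:
    path: str                      # hypothetical path, for illustration only
    purpose: str
    fields: list[str] = field(default_factory=list)


@dataclass
class FeaturePlan:
    title: str
    approach: str                  # the one-line decision from the brainstorm
    files: list[PlannedFile]       # listed in implementation order
    acceptance_criteria: list[str]

    def numbered_criteria(self) -> list[str]:
        """Give every criterion a stable number a reviewer can refer to."""
        return [
            f"AC{number}: {text}"
            for number, text in enumerate(self.acceptance_criteria, start=1)
        ]

    def render(self) -> str:
        """Render the plan as the text handed to the implementing agent."""
        lines = [
            f"Feature: {self.title}",
            f"Approach: {self.approach}",
            "",
            "Files (in implementation order):",
        ]
        for step, planned in enumerate(self.files, start=1):
            lines.append(f"{step}. {planned.path}: {planned.purpose}")
            for name in planned.fields:
                lines.append(f"   - {name}")
        lines += ["", "Acceptance criteria:"]
        lines += self.numbered_criteria()
        return "\n".join(lines)


plan = FeaturePlan(
    title="Booking check-in",
    approach="Check-in is a state change on the booking itself",
    files=[
        PlannedFile("booking/check_in.py", "check-in rule", ["checked_in_at"]),
        PlannedFile("tests/test_check_in.py", "covers every criterion"),
    ],
    acceptance_criteria=[
        "Only the booking owner or staff can check in",
        "Checking in an already checked-in booking is rejected",
    ],
)
print(plan.render())
```

The numbering is the part that matters: it turns "did it work?" into a list of items that can each be answered yes or no.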

When it’s done, a separate review agent verifies the implementation against those criteria. Not against a vague memory of what was asked for. Against specific, numbered items. Did it handle the case where the booking is already checked in? The plan said it should. Did it?
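One way to picture the reviewer's output is a verdict per numbered criterion, where anything the reviewer did not explicitly confirm counts against approval. This is a sketch under assumptions: `Verdict`, `Finding`, and `summarize` are hypothetical names, and the criteria are invented for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    PASS = "pass"
    FAIL = "fail"
    UNVERIFIED = "unverified"


@dataclass
class Finding:
    criterion: int     # number of the acceptance criterion in the plan
    verdict: Verdict
    evidence: str      # where the reviewer looked, or what it found missing


def summarize(criteria: dict[int, str], findings: list[Finding]) -> tuple[bool, list[str]]:
    """Return (approved, report lines). A criterion without a finding is unverified."""
    by_number = {finding.criterion: finding for finding in findings}
    approved = True
    report = []
    for number in sorted(criteria):
        finding = by_number.get(number)
        verdict = finding.verdict if finding else Verdict.UNVERIFIED
        evidence = finding.evidence if finding else "no finding reported"
        if verdict is not Verdict.PASS:
            approved = False
        report.append(f"AC{number} [{verdict.value}] {criteria[number]} ({evidence})")
    return approved, report


criteria = {
    1: "Only the booking owner or staff can check in",
    2: "Checking in an already checked-in booking is rejected",
}
findings = [Finding(1, Verdict.PASS, "authorization check present and tested")]

approved, report = summarize(criteria, findings)
print(approved)
print("\n".join(report))
```

Treating a missing finding as a failure is a deliberate choice in this sketch: a criterion nobody checked is not a criterion that passed.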

This is why review actually works. You can’t verify against a standard you never set.

CLAUDE.md: treat it as a living document, not a config file

There’s good general guidance for writing CLAUDE.md – keep it short, only document deviations from defaults, cut anything the agent can figure out by reading your code. Follow that. But what made the real difference for us was how we maintain it over time.

CLAUDE.md doesn’t start good. It gets good through iteration – and the iteration follows a specific loop.

When the agent does something architecturally wrong, don’t fix the code silently. Ask why it made that choice. The reasoning tells you exactly what rule is missing. Then add it.

Our agent once put business logic directly in a processor. Instead of refactoring it silently, we asked why, understood the reasoning, and added a rule: thin processors, with single-responsibility classes for any actual work. Every processor since has been clean.
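For readers who have not seen the pattern, here is a minimal sketch of what a thin processor looks like. It is in Python for illustration only, and `Booking`, `CheckInBooking`, `CheckInProcessor`, and `AlreadyCheckedIn` are hypothetical names, not classes from our project.

```python
from dataclasses import dataclass


@dataclass
class Booking:
    id: int
    checked_in: bool = False


class AlreadyCheckedIn(Exception):
    """Raised when a booking is checked in a second time."""


class CheckInBooking:
    """Single-responsibility class: owns the check-in rule and nothing else."""

    def execute(self, booking: Booking) -> Booking:
        if booking.checked_in:
            raise AlreadyCheckedIn(f"Booking {booking.id} is already checked in")
        booking.checked_in = True
        return booking


class CheckInProcessor:
    """Thin processor: receives the request and delegates, with no logic of its own."""

    def __init__(self, check_in: CheckInBooking) -> None:
        self._check_in = check_in

    def process(self, booking: Booking) -> Booking:
        return self._check_in.execute(booking)


processor = CheckInProcessor(CheckInBooking())
booking = processor.process(Booking(id=7))
print(booking.checked_in)
```

The business rule can now be tested without going through the processor at all, which is much of what the rule buys you.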

When you fix the code, you fix one file. When you add the rule, you fix every file the agent will ever write.

Over time, CLAUDE.md stops being generic and becomes genuinely specific to your project – not from planning sessions, but from real violations caught in real code, understood, and encoded.

Review agents with fresh context

Even a good CLAUDE.md has limits. When an agent is deep in a complex task – long session, many files – context fills up. Rules compete for attention with everything else. Sometimes they lose.

The fix: review agents that run in fresh sessions, separate from the implementation.

When the implementer finishes, multiple review agents run. One checks whether CLAUDE.md rules were followed. One verifies acceptance criteria. Each gathers its own context – runs the git diff, reads CLAUDE.md itself. None of them receives anything from the implementation session.
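The context-gathering step can be sketched as a few small functions. This is an illustration under assumptions, not our actual setup: the function names, the `main` base branch, and the prompt wording are all hypothetical, and how the prompt is handed to a fresh agent session depends on your tool, so that part is left out.

```python
import subprocess
from pathlib import Path


def read_rules(repo: Path) -> str:
    """Read CLAUDE.md straight from disk, not from any earlier session."""
    return (repo / "CLAUDE.md").read_text(encoding="utf-8")


def read_diff(repo: Path, base: str = "main") -> str:
    """Return the diff against the base branch (branch name is an assumption)."""
    result = subprocess.run(
        ["git", "diff", base],
        cwd=repo,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


def build_review_prompt(task: str, rules: str, diff: str) -> str:
    """Assemble everything a reviewer needs; nothing from the implementer's session."""
    return "\n\n".join(
        [
            task,
            "Project rules (CLAUDE.md):\n" + rules,
            "Changes under review (git diff):\n" + diff,
        ]
    )


# Hypothetical task descriptions, one per reviewer.
RULES_REVIEW = "List every place where the diff violates a project rule."
CRITERIA_REVIEW = "For each numbered acceptance criterion, state pass or fail with evidence."


def prompts_for(repo: Path) -> list[str]:
    """Build one independent prompt per reviewer from the same on-disk inputs."""
    rules = read_rules(repo)
    diff = read_diff(repo)
    return [build_review_prompt(task, rules, diff) for task in (RULES_REVIEW, CRITERIA_REVIEW)]
```

Note what the functions do not take as input: no conversation history, no summary from the implementer. The reviewers see only the rules and the resulting changes.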

Clean context finds different things. It’s the same reason you spot an obvious bug after a break that you missed during hours of grinding. A fresh session sees the code as it is, not as the implementer intended it to be.

Quality is a process

None of this was set up in one go. Every step we follow today exists because something went wrong and we noticed.

An agent ignored an architectural decision – we made our rules more precise. A review missed something – we added a review agent for it. A feature had bugs because we didn’t plan it well enough – we added brainstorming before planning. Each time something wasn’t done the way we imagined, the process got a little tighter.

That’s the real habit: not just following a workflow, but improving it every time it lets something through.

A capable AI doesn’t guarantee code quality. Your process does.